It’s been a long time since I’ve posted anything here. Or anywhere, really. Except my private Twitter account, where only a small number of people get to see my small number of tweets. My personal life over the last few years has been such that, for now, keeping a low profile is appropriate. But the select few followers of that locked account have provided me an outlet, when one is required, for the thoughts that refuse to stay in my head. And even when I’m not actively tweeting, the knowledge that they’re there has helped in no small way to keep me sane when I could very easily have fallen apart.

Those days, it seems, are now numbered.

Twitter’s new billionaire owner, the racist, transphobic, inhuman product of every privilege afforded to his rich white family by apartheid, is quickly burning down the house he just bought on a whim. He’s spent the last couple of weeks firing all the people who kept the machine running, without due process and therefore without any of the carefully planned handovers that are required when key personnel leave a technology company. He is no doubt going to lose a lot of costly legal battles over the coming months as the direct victims of his “management” style assert their rights in numerous jurisdictions (he’s in for a shock if he thinks employment law is universal), and I think it can be taken for granted that a great many of those who haven’t yet lost their job (or quit in protest) are now actively looking for a new one anyway. A complex infrastructure that isn’t backed by knowledge and experience is in dire peril, always just one unforeseen incident away from a potentially unrecoverable meltdown.

At the same time, the overgrown toddler has alienated the very people that give the platform its capital value: users and advertisers. Without the users, a social network is nothing. Users provide the content. We choose to provide that content in exchange for access to the platform – an arrangement that is equitable enough for most people. The interactions between users create a community that attracts new members, often without the company having to spend a penny on promotion. Advertisers, in turn, are paying for access to the community. And they demand two important things along with that:

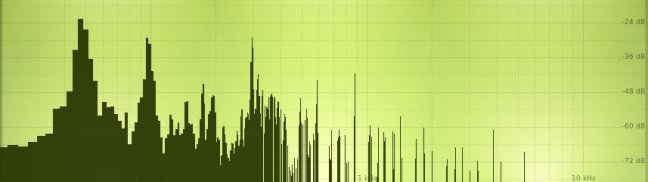

Firstly, they want to know that their brand(s) will be promoted to as many relevant users as possible. Just as you probably wouldn’t waste your time trying to market condoms in the Vatican, companies want to know that the money they’re paying is going to result in actual sales. This is the job of the Algorithm. Designing such algorithms is hard. You don’t just buy a copy of Learn Python in 59 Seconds and come away knowing how to do this stuff, even if you buy a copy of Psychology for Nitwits along with it. Getting to the point where brands can submit an ad and be pretty sure of a decent conversion rate (without being blatantly unethical) has been an evolutionary process, the work of many, many people over a long period. And as anyone who has worked with that sort of organic system knows, when the ones who understand how it works are gone, you poke around inside it at your peril. And when the boss is a playground bully demanding unreasonable changes from too few staff on unrealistic timescales, you’d better believe something is going to break.

But of course it’s worse than that, because as a simple matter of numbers, if your users are fleeing then fewer people are going to see the ads at all. Which means that even if the conversion rate remains static (and it’s not going to improve), advertisers are going to see a drop in sales. The output of any algorithm is only as good as its input. Which leads on to the second demand: brands must never be allowed to become associated with Bad Stuff. Ensuring this is the job of content moderators, who must enforce carefully balanced rules of behaviour.

Twitter already had a problem in that department, with certain forms of abuse (notably transphobia) being routinely ignored, and disinformation spreading faster than a fart in an elevator. They had started to tackle some of these issues, with some particularly egregious transgressors being banned, and fact-checking mechanisms put in place. Now they are ruled by a dictator who claims to champion “free speech”, but who defines that as having the freedom to be as nasty and dishonest as he wants – as long as nobody uses that same “freedom” to criticise him, of course.

No sooner had the hateful narcissist entered the building than the rate of posting of a well-known racial slur went through the roof, as the scum of the community – previously held in check to some extent by the rules – tested the water to see just how much they could get away with. As the man himself said: let that sink in. Without having to change a single rule, simply by stating his own twisted viewpoint, he was able to make Twitter a significantly less safe environment for millions of users. The moderators, if there are even any left at this point, can’t effectively enforce policies that are directly contradicted by their boss. This means that the “average” tweet is now much more likely to be toxic, not least to advertisers. And the more toxic tweets there are, the greater the chance that a promoted tweet is going to be juxtaposed, or associated, with one that has the capacity to seriously damage the brand. And that’s before considering the offensive odour generated by the rabid ondatra zibethicus at the top.

I’m currently seeing almost no ads on my timeline. I don’t know how typical this is yet, but it certainly suggests to me that advertisers that the Algorithm would normally pick to promote to me have already decided they’d rather spend their money elsewhere (assuming someone’s not already broken it). Many more will follow. Twitter was already unprofitable; I’d be surprised if it can survive a massive drop in income. The emerald eejit’s brilliant idea – replacing account verification with a pseudo-protection racket – will not only fail to come close to offsetting the lost revenue, but will also make the platform even less attractive to both users and advertisers. There’s already been a deluge of new “verified” accounts with the capacity to cause chaos for brand managers everywhere, and to make sorting the truth from the chaff near impossible. The trust is well and truly broken.

If you know my personal politics it might seem odd for me to be talking about things in largely capitalist terms. But the demise of Twitter at the hands of an escaped lab experiment with more money than sense (or ethics) is fundamentally a capitalist phenomenon. It could only happen like this in a society that allows individuals to accrue disproportionate power by material acquisition. And while to the users Twitter is a platform and a community, underneath that it’s your typical, financially underperforming tech business, ever on the edge of bankruptcy, that manages to keep the lights on by maintaining cash flow and promising to make a profit eventually. Take away the cash flow, and the lights go off. Some vestigial version of the platform may remain, albeit without the engaging content or enough money available to continue functioning well, but I can say quite confidently that its spiral into irrelevance cannot be averted at this point.

I referred to the screwed employees earlier as direct victims, because there are also countless indirect victims. They are the community. Or more correctly, communities. Twitter has never been a perfect platform, precisely because of its centralised, power-imbalanced, capitalist nature. But it somehow became a place where minorities, and people with shared interests, would find kindred spirits and become a greater, more supportive whole. While so-called influencers might just have been there to chase clout (though Instagram and TikTok cater to them better these days), many others were there to make real connections with people, to share and spread knowledge, to pursue social justice, and more. For all the loudmouths with millions of followers, the real joy of Twitter was in having a circle of friends you’d probably never have met offline, especially during a global pandemic that has forced us to reëvaluate how socialising works in a suddenly much more dangerous world. Were it not for Twitter, I would not have met my best friend, and my life would be very much the worse for it.

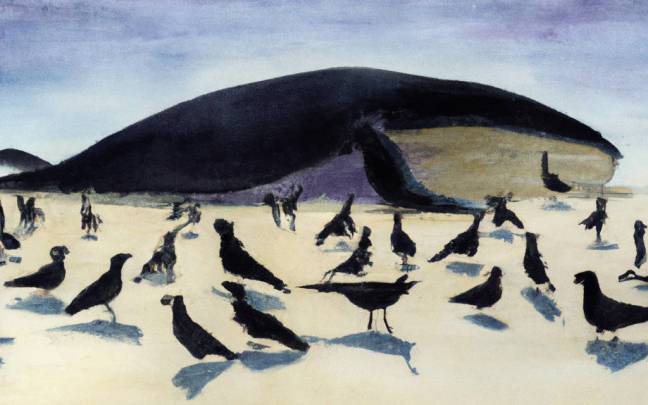

All that has changed now. A great many good people have left. Others have stayed, either out of misplaced hope, lack of a clear alternative, or simply an inability to avert their gaze from the train wreck. But there’s a palpable change in the atmosphere; the air has become noxious and the communities are evaporating. With talk of a paywall, plummeting income, the loss of critical expertise, and active encouragement of toxic behaviour by a thin-skinned, spoiled brat whose genius-level business plan is “do lots of dumb things” †, Twitter is now on life support. All good things, it seems, must indeed come to an end.

Along with many others, I’ve decided to create a personal Mastodon account now, before the bird finally falls off its perch. The main reason I hadn’t done so earlier is that I couldn’t take my friends with me; however, that has become moot. Like anyone settling into a new home, I hope to be accepted by the neighbours, but we refugees have a responsibility to be good citizens too. I’ve had more than my share of antisocial jerks living next door to me and, just as I’ve learned to stand up to them in meatspace, I would totally deserve the pushback (or indeed a ban) if I barged into an existing online community and took a huge dump on their virtual carpet.

The Fediverse is not Twitter. And that’s a good thing. It is a multicultural, heterogeneous network; there are many different but interconnected platforms, of which Mastodon is only one. And while you can easily follow and interact with many other users regardless of where they are on that network, every instance/server hosts its own community, with its own identity and social contract. This is something we absolutely must respect. Coming from a mixed space where all discussions have equal priority, content warnings are rare (and frequently pointless), and friendship and hostility can be found in equal measure, there is the risk that we’ll bring with us an attitude of assertiveness that may have been necessary there, but runs counter to the culture of the community we’ve joined. Let’s not do that.

So to begin with, I’m not going to post much. I’ll just get the lie of the land and learn how the locals would like me to behave. For now I’ve picked a place that claims that Nazis and bigots aren’t welcome, and already it’s clear that life is much more peaceful there. If for some reason that particular local community turns out not to be a good fit for me, the beauty of federation is that I can move to another instance and take my connections with me. Either way, I suspect that sooner rather than later I’ll be so used to village life that I’ll stop shuttling back to the city, and I’ll wonder why it took me so long to leave.